A Continual Semantic Segmentation Method with Prototype Memory and Contrastive Distillation

Keywords:

Continual Semantic Segmentation; Prototype Memory; Contrastive Distillation; Catastrophic Forgetting; Medical Image Analysis

Abstract

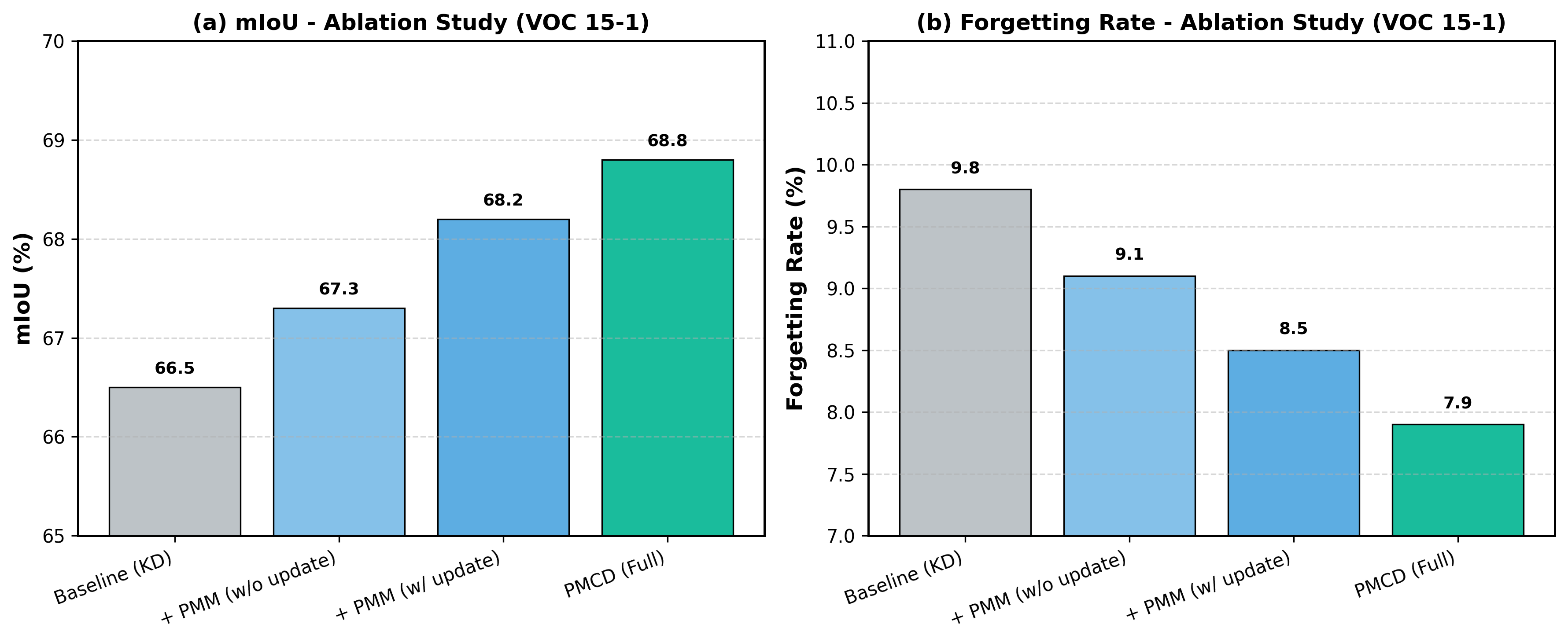

Deep learning has driven substantial progress in semantic segmentation, but most existing models suffer from catastrophic forgetting when they are trained incrementally to recognize new classes. The problem is more acute in privacy-sensitive domains such as medical image analysis, where retaining historical data is often prohibited. To address it, we propose Continual Semantic Segmentation with Prototype Memory and Contrastive Distillation (PMCD), an approach that stores no exemplar data. Instead, a Prototype Memory Module continuously updates and preserves one representative feature prototype per class, capturing the essential knowledge of previously learned categories without keeping any past images. In parallel, a Contrastive Distillation Mechanism combines contrastive learning with knowledge distillation: it encourages the updated model to stay consistent in feature space with the previous model’s predictions for old classes, while sharpening the separation between old and newly introduced classes. Experiments on the public benchmarks PASCAL VOC 2012 and ADE20K show that PMCD outperforms current state-of-the-art methods across multiple class-incremental learning scenarios, improving mean Intersection over Union (mIoU) by 3–5% and significantly lowering the forgetting rate. This work thus offers an effective, exemplar-free solution to catastrophic forgetting in continual semantic segmentation and supports broader adoption in privacy-critical fields such as medical imaging.
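
To make the two components concrete, the following is a minimal sketch and not the PMCD implementation. The abstract does not state the prototype update rule or the exact loss, so the sketch assumes a running mean per class and an InfoNCE-style objective over cosine similarities. The names `PrototypeMemory`, `cosine`, `contrastive_distillation_loss`, and the `temperature` value are hypothetical and chosen for illustration only.

```python
import math


class PrototypeMemory:
    """Keeps one running-mean feature vector per class; no images are stored.

    Assumption: the running mean is an illustrative update rule, not
    necessarily the one used by PMCD.
    """

    def __init__(self):
        self.prototypes = {}  # class id -> list of floats
        self.counts = {}      # class id -> number of feature vectors seen

    def update(self, class_id, feature):
        # Incremental mean: p <- p + (f - p) / n
        n = self.counts.get(class_id, 0) + 1
        old = self.prototypes.get(class_id, [0.0] * len(feature))
        self.prototypes[class_id] = [p + (f - p) / n for p, f in zip(old, feature)]
        self.counts[class_id] = n


def cosine(a, b):
    """Cosine similarity of two vectors; returns 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0 else 0.0


def contrastive_distillation_loss(feature, class_id, memory, temperature=0.1):
    """InfoNCE-style loss (assumed form): low when `feature` is close to the
    stored prototype of `class_id` and far from all other prototypes."""
    logits = {c: cosine(feature, p) / temperature
              for c, p in memory.prototypes.items()}
    # Numerically stable log-sum-exp over all class prototypes.
    m = max(logits.values())
    log_denominator = m + math.log(sum(math.exp(v - m) for v in logits.values()))
    return log_denominator - logits[class_id]


# Usage: prototypes built from old-model features, then scored against a
# feature produced by the updated model.
memory = PrototypeMemory()
memory.update(0, [1.0, 0.0])
memory.update(0, [3.0, 0.0])
memory.update(1, [0.0, 1.0])
loss_aligned = contrastive_distillation_loss([1.0, 0.0], 0, memory)
loss_misaligned = contrastive_distillation_loss([1.0, 0.0], 1, memory)
```

In this toy setting, the feature that points toward its own class prototype receives a much smaller loss than the same feature scored against the other class. That is the behavior the abstract describes: old-class representations stay anchored while old and new classes are pushed apart. A real system would compute these quantities on batched tensors from the segmentation backbone.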